What is Spark?

Spark is “a fast and general engine for large-scale data processing” (http://spark.apache.org/).

Spark is also one of the most popular open-source frameworks for big data, based on the number of contributors. Let us find out why this is the case.

When do you use Spark?

Suppose you would like to analyze a data set: perform ETL or data munging, then run SQL queries such as grouping and aggregations against the data, and maybe apply a machine learning algorithm. When the data size is small, everything will run quickly on a single machine, and you can use analysis tools like Pandas (Python), R, or Excel, or write your own scripts. But for larger data sets, processing will be too slow on a single machine, and you will want to move to a cluster of machines. This is when you would use Spark.

You could probably benefit from Spark if:

- Your data is currently stored in Hadoop / HDFS.

- Your data set contains more than 100 million rows.

- Ad-hoc queries take longer than 5 minutes to complete.

What Spark is Not, typical architecture

Spark can be a central component of a big data system, but it is not the only component. It is not a distributed file system: you would typically store your data on HDFS or S3. Nor is Spark a NoSQL database: Cassandra or HBase would be a better place for horizontally scalable table storage. And it is not a message queue: you would use Kafka or Flume to collect streaming event data. Spark is, however, a compute engine that can take input from, or send output to, all of these other systems.

How do you use Spark?

Implemented in Scala, Spark can be programmed in Scala, Java, Python, SQL, and R. However, not all of the latest functionality is immediately available in all languages.

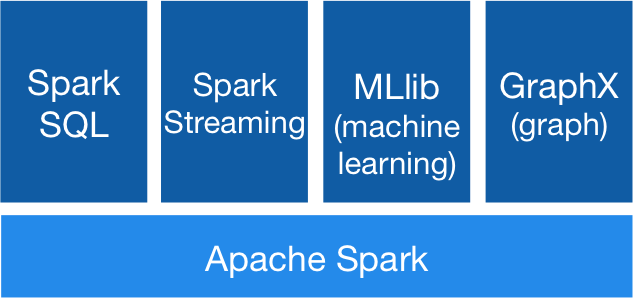

What kind of operations does Spark support?

Spark SQL. Spark supports the batch operations involved in ETL and data munging via the DataFrame API. It can parse different input formats, such as JSON or Parquet. Once the raw data is loaded, you can easily compute new columns from existing columns. You can slice and dice the data by filtering, grouping, aggregating, and joining with other tables. Spark supports relational queries, which you can express in SQL or through the DataFrame API.

Spark Streaming. Spark also provides scalable stream processing. Given an input data stream, for example one coming from Kafka, Spark allows you to perform operations on the streaming data, such as map, reduce, join, and window.

MLlib. Spark includes a machine learning library with scalable algorithms for classification, regression, collaborative filtering, clustering, and more. If training a model on your data set takes too long on a single machine, you might consider cluster computing with Spark.

GraphX. Finally, GraphX is a component in Spark for scalable batch processing on graphs.

As you may have noticed by now, Spark processing is batch-oriented. It works best when you want to perform the same operation on all of your data, or on a large subset of it. Even with Spark Streaming, you operate on small batches of the data stream, rather than one event at a time.
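The micro-batch idea can be sketched in plain Python, without Spark. This is not the Spark Streaming API, only an illustration of the processing model: the helper names `micro_batches` and `process_batch` are hypothetical, and batches here are cut by event count for simplicity, whereas Spark Streaming cuts them by time interval.

```python
from itertools import islice
from typing import Iterable, Iterator, List


def micro_batches(events: Iterable[int], batch_size: int) -> Iterator[List[int]]:
    """Group an event stream into consecutive batches of at most batch_size events."""
    iterator = iter(events)
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            return
        yield batch


def process_batch(batch: List[int]) -> int:
    """Apply one operation to the whole batch: map (square), then reduce (sum)."""
    return sum(value * value for value in batch)


# Hypothetical event stream: the integers 1..7, processed three events at a time.
batch_results = [
    process_batch(batch) for batch in micro_batches(range(1, 8), batch_size=3)
]
```

Each entry of `batch_results` is the output for one batch, so the stream is handled as a sequence of small batch jobs instead of event by event.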

Spark vs. Hadoop MapReduce, Hive, Impala

How does Spark compare with other big data compute engines? Unlike Hadoop MapReduce, Spark caches data in memory for huge performance gains when you have ad-hoc queries or iterative workloads, which are common in machine learning algorithms. Hive and Impala both run SQL queries at scale; the advantage of Spark over these systems is (1) the convenience of writing both queries and user-defined functions (UDFs) in the same language, such as Scala, and (2) support for machine learning algorithms, streaming data, and graph processing within the same system.