Saturday, September 12, 2015

Attending Grace Hopper Conference

I will be attending the Grace Hopper Celebration of Women in Computing this October, in Houston, TX. I am looking forward to connecting with other women in data science and big data. I would love to compare notes on Spark, Scala, Databricks, and machine learning. I would also like to meet others doing agile and scrum, or leading data science projects. There will be talks on professional development, and I will be leading a discussion on the impact of maternity leave on a woman's career. Last, but not least, I will be happy to discuss jobs at Samsung SDSA with job seekers.

Thursday, June 18, 2015

Reading JSON data in Spark DataFrames

Overview

Spark DataFrames make it easy to read from a variety of data formats, including JSON. In fact, Spark even infers the JSON schema for you automatically. Once the data is loaded, however, figuring out how to access individual fields is not so straightforward. This post will walk through reading top-level fields as well as JSON arrays and nested objects. The code provided is for Spark 1.4. Update: please see my updated post on an easier way to work with nested array of struct JSON data.

Load the JSON File

Let's begin by loading a JSON file, where each line is a JSON object:

{"name":"Michael", "cities":["palo alto", "menlo park"], "schools":[{"sname":"stanford", "year":2010}, {"sname":"berkeley", "year":2012}]}

{"name":"Andy", "cities":["santa cruz"], "schools":[{"sname":"ucsb", "year":2011}]}

{"name":"Justin", "cities":["portland"], "schools":[{"sname":"berkeley", "year":2014}]}

The Scala code to read a JSON file:

>> val people = sqlContext.read.json("people.json")

people: org.apache.spark.sql.DataFrame

Read a Top-Level Field

With the above command, all of the data is read into a DataFrame. In the following examples, I will show how to extract individual fields into arrays of primitive types. Let's start with the top-level "name" field:

>> val names = people.select('name).collect()

names: Array[org.apache.spark.sql.Row] = Array([Michael], [Andy], [Justin])

>> names.map(row => row.getString(0))

res88: Array[String] = Array(Michael, Andy, Justin)

Use the select() method to specify the top-level field, collect() to collect it into an Array[Row], and the getString() method to access a column inside each Row.

Flatten and Read a JSON Array

Update: please see my updated post on an easier way to work with nested array of struct JSON data.

Next, notice that each Person has an array of "cities". Let's flatten these arrays and read out all their elements.

>> val flattened = people.explode("cities", "city"){c: List[String] => c}

flattened: org.apache.spark.sql.DataFrame

>> val allCities = flattened.select('city).collect()

allCities: Array[org.apache.spark.sql.Row]

>> allCities.map(row => row.getString(0))

res92: Array[String] = Array(palo alto, menlo park, santa cruz, portland)

The explode() method explodes, or flattens, the cities array into a new column named "city". We then use select() to select the new column, collect() to collect it into an Array[Row], and getString() to access the data inside each Row.
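
As a mental model, explode() behaves much like flatMap on ordinary Scala collections: it produces one output row per array element. The sketch below uses plain Scala rather than Spark, so it only illustrates the idea; Person and ExplodeSketch are hypothetical names made up for this example.

```scala
// Plain-Scala analogy for explode(); no Spark required.
// Person and ExplodeSketch are hypothetical names, not part of the Spark API.
case class Person(name: String, cities: List[String])

object ExplodeSketch {
  val people = List(
    Person("Michael", List("palo alto", "menlo park")),
    Person("Andy", List("santa cruz")),
    Person("Justin", List("portland"))
  )

  // One (name, city) pair per array element, like one output row per city.
  val flattened: List[(String, String)] =
    people.flatMap(p => p.cities.map(city => (p.name, city)))

  // Keep only the new "city" column.
  val allCities: List[String] = flattened.map(_._2)
}
```

Here ExplodeSketch.allCities holds the same four cities, in the same order, as the Spark result above.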

Read an Array of Nested JSON Objects, Unflattened

Finally, let's read out the "schools" data, which is an array of nested JSON objects. Each element of the array holds the school name and year:

>> val schools = people.select('schools).collect()

schools: Array[org.apache.spark.sql.Row]

>> val schoolsArr = schools.map(row => row.getSeq[org.apache.spark.sql.Row](0))

schoolsArr: Array[Seq[org.apache.spark.sql.Row]]

>> schoolsArr.foreach(schools => {

>> schools.map(row => print(row.getString(0), row.getLong(1)))

>> print("\n")

>> })

(stanford,2010)(berkeley,2012)

(ucsb,2011)

(berkeley,2014)

Use select() and collect() to select the "schools" array and collect it into an Array[Row]. Now, each "schools" array is of type List[Row], so we read it out with the getSeq[Row]() method. Finally, we can read the information for each individual school, by calling getString() for the school name and getLong() for the school year. Phew!

Summary

In this blog post, we have walked through accessing top-level fields, arrays, and nested JSON objects from JSON data. The key classes involved were DataFrame, Array, Row, and List. We used the select(), collect(), and explode() DataFrame methods, and the getString(), getLong(), and getSeq[T]() Row methods to read data out into arrays of primitive types.

Saturday, May 23, 2015

GraphX: Graph Computing for Spark

Overview

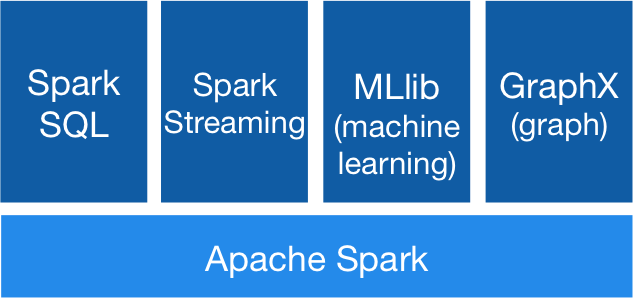

I've been reading about GraphX, Spark's graph processing library. GraphX provides distributed, in-memory graph computing. The key thing that differentiates it from other large-scale graph processing systems, like Giraph and GraphLab, is that it is tightly integrated within the Spark ecosystem. This allows efficient data pipelines that combine ETL (SQL), machine learning, and graph analysis within one framework (Spark), without the overhead of running multiple systems and copying data between them.

| The Spark stack. |

Graph Library for the Spark Framework

Graphs in GraphX are directed property multigraphs, which means that each vertex and each edge can have properties (attributes) associated with it, and that multiple edges can connect the same pair of vertices. GraphX graphs are distributed and immutable. You create a graph in GraphX by providing an RDD of vertices and an RDD of edges. You can then perform OLAP operations on a graph through the API. A Pregel API supports vertex-centric, bulk-synchronous parallel, iterative algorithms. In-memory indexes speed up graph operations. Edge partitioning (which means vertices can be split across partitions) and vertex data replication speed up edge traversal, which usually involves communication across machines. A 2014 research paper shows performance comparable to other graph systems, Giraph and GraphLab.

| GraphX is built on RDDs. |
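
To make the property-graph data model concrete, here is a minimal sketch in plain Scala, with ordinary collections standing in for the vertex and edge RDDs. It is not GraphX code; PropertyEdge and GraphSketch are hypothetical names, and the sample vertices and edges are invented for illustration.

```scala
// Plain-Scala stand-in for the GraphX data model; no Spark required.
// PropertyEdge and GraphSketch are hypothetical names for illustration.
case class PropertyEdge(srcId: Long, dstId: Long, attr: String)

object GraphSketch {
  // Vertex collection: vertex id -> property. GraphX would hold this in an RDD.
  val vertices: Map[Long, String] = Map(1L -> "alice", 2L -> "bob", 3L -> "carol")

  // Edge collection. A multigraph allows parallel edges, e.g. two edges 1 -> 2.
  val edges: List[PropertyEdge] = List(
    PropertyEdge(1L, 2L, "follows"),
    PropertyEdge(1L, 2L, "emails"),
    PropertyEdge(2L, 3L, "follows")
  )

  // Count incoming edges per destination vertex.
  val inDegrees: Map[Long, Int] =
    edges.groupBy(_.dstId).map { case (id, es) => (id, es.size) }
}
```

Vertex 2 ends up with an in-degree of 2 because both parallel edges from vertex 1 are counted, which is the multigraph behavior described above.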

Applications

A couple of recent MLlib algorithms are implemented on GraphX: LDA topic modeling and Power Iteration Clustering. Alibaba Taobao uses GraphX for data mining in ecommerce, modeling user-item-merchant interactions as a graph. Netflix uses GraphX for movie recommendation, with graph diffusion and LDA clustering algorithms.

Friday, April 24, 2015

Spark for Exploratory Data Analysis?

Python and R have been known for their data analysis packages and environments. But, now that Spark supports DataFrames, will it be possible to do exploratory data analysis with Spark? Assuming the production system is implemented in Spark for scalability, it would be nice to do the initial data exploration within the same framework.

At first glance, all the major components are available. With Spark SQL, you can load a variety of different data formats, such as JSON, Hive, Parquet, and JDBC, and manipulate the data with SQL. Since the data is stored in RDDs (with schema), you can also process it with the original RDD APIs, as well as algorithms and utilities in MLlib.

Of course, the details matter, so without having done a real world project in this framework, I have to wonder: what is missing? Is there a critical data frame function in Pandas or R, that is not yet supported in Spark? Are there other missing pieces that are critical to real world data analysis? How difficult is it to patch up those missing pieces by linking in external libraries?

Thursday, December 4, 2014

Lightbeam: Shines a Light on Creepy Ads

Have you ever had a creepy ad experience? Have you gone online to search for lodging in Lake Tahoe, and then two minutes later received an email from Groupon for a "Tahoe weekend getaway"? Sure, many companies advertise online, but Groupon ads seem extremely targeted. So, I was curious: "how much is Groupon tracking me?"

Lightbeam Shows Third-Party Tracking

One way that websites target ads is by tracking a user's online behavior. When a user visits a website, the web page may execute tracking scripts and store cookies, which allow third-party ad networks to track that user. These ad networks can then collect information whenever the user visits another website that partners with them. (More info here.)

To investigate the extent of Groupon's tracking, I installed the Lightbeam add-on to my Firefox browser. This add-on, released by Mozilla about a year ago, shows all the third-party cookies and connections made by websites, in an interactive visualization. After browsing to a few websites (Yahoo, Google, Groupon), I could see that Groupon contacted by far the largest number of third-party domains for ad tracking, 17 in all, 8 of which also created a cookie.

| Groupon (the green "G") made the most third-party connections, as shown in Lightbeam. |

Third-party Domains

What are these third-party domains that Groupon connected with?

- Google Tag Manager: "Tags are tiny bits of website code that let you measure traffic and visitor behavior."

- Criteo: "Personalized retargeting company."

- Advertising.com: "The most mature ad optimization and bid management system in the business."

- Rubicon Project: "Leading the automation of advertising."

- Doubleclick: Internet ad serving, subsidiary of Google.

- PubMatic: "Programmatic advertising."

- Yahoo

- Channel Intelligence (Google): "Optimize your data and sell more products."

- Nanigans: "The industry-leading advertising automation platform."

- Atwola: "Atwola is a cookie that is used to track user browsing history."

Curious about ad tracking in websites you visit? Check out the Lightbeam Firefox add-on.

Sunday, September 28, 2014

Higgs Data Challenge: Particle Physics meets Machine Learning

The HiggsML Challenge on Kaggle

How do you frame a particle physics problem as a data challenge? Will it be accessible to Kagglers? Will data scientists be able to outperform physicists with machine learning, as was the case in the NASA dark matter challenge? The Kaggle Higgs competition recently came to a close with great results.

| The HiggsML challenge: when High Energy Physics meets Machine Learning. |

| Competition summary. |

A Particle Physics Data Challenge

Goal: Classify collision events

The goal of the competition was to classify 550,000 proton-proton collision events as either originating from a Higgs boson decay (a "signal" event) or not (a "background" event). This type of analysis can be used in experiments at the Large Hadron Collider to discover decay channels of the Higgs boson.

Data: Tracks of particles produced in each collision

Each proton-proton collision, or event, produces a number of outgoing particles, whose tracks are recorded by a particle detector. The training data consisted of 250,000 labelled events, obtained from simulations of the collisions and detector. For each event, 30 features based on particle track data, such as particle mass, speed, and direction, were provided. The actual experimental data is highly unbalanced (much more background than signal), but the training data provided was fairly balanced.

| Particle tracks: a Higgs boson decays into two τ particles, each of which subsequently decays into either an electron (blue line) or a muon (red line). ATLAS Experiment © 2014 CERN. |

Evaluation Metric: Significance of discovery

Instead of a standard performance metric, submissions were evaluated by Approximate Median Significance (AMS), a measure of how well a classifier is able to discover new phenomena.

When a classifier labels events (in experimental data, not simulations) as "signal", the p-value is the probability that background events alone would produce at least that many selected events. The corresponding "significance" (Z) is the normal quantile of that p-value, measured in units of sigma. We want a small p-value and a large "significance" before claiming a discovery. Finally, AMS is an estimate of the "significance" for a given classifier. For more details, see the Kaggle evaluation page.
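
For the curious, here is a minimal Scala sketch of the AMS calculation as I understand it from the challenge documentation: AMS = sqrt(2((s + b + bReg) ln(1 + s / (b + bReg)) - s)), where s and b are the weighted counts of true signal and background events that the classifier selected, and bReg = 10 is a regularization constant. The object and parameter names are my own, and the constant should be checked against the Kaggle evaluation page.

```scala
// Sketch of the Approximate Median Significance (AMS) metric.
// AmsSketch, s, b and bReg are illustrative names; bReg = 10 is assumed
// from the challenge documentation and should be verified there.
object AmsSketch {
  // s: weighted sum of selected events that are truly signal.
  // b: weighted sum of selected events that are truly background.
  def ams(s: Double, b: Double, bReg: Double = 10.0): Double =
    math.sqrt(2.0 * ((s + b + bReg) * math.log(1.0 + s / (b + bReg)) - s))
}
```

When s is much smaller than b, this expression is close to the familiar s / sqrt(b) rule of thumb, which is why selecting more background quickly drags the score down.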

Making Particle Physics Accessible

The Organizers

The contest organizers did a fantastic job of designing and organizing this competition. A group of physicists and machine learning researchers, they took a difficult subject, particle physics, and spent 18 months creating a data challenge that ultimately attracted 1,785 teams (a Kaggle record).

Their success can be attributed to the following:

- Designing a dataset that could be analyzed without any domain knowledge.

- Providing multiple software starter kits that made it easy to get an analysis up and running.

- Providing a 16-page document explaining the challenge.

- Answering questions promptly on the Kaggle discussion forum.

| Not needed to win the contest. "Higgs-gluon-fusion" by TimothyRias. Licensed under Creative Commons Attribution-Share Alike 3.0. |

The Results

Did machine learning give data scientists an edge? How did the physicists fare?

Cross validation was key

It was not machine learning, but the statistical technique of cross validation, that was key to winning this competition. The AMS metric had a high variance, which made it difficult to know how well a model was performing. You could not rely on the score on the public leaderboard because it was calculated on only a small subset of the data. Therefore, a rigorous cross validation (CV) method was needed for model comparison and parameter tuning. The 1st-place team, Gabor Melis, did 2-fold stratified CV repeated 35 times on random shuffles of the training data. Both the 1st-place and 4th-place teams mentioned on the forum that cross validation was key to a high score.

Insufficient CV could lead to overfitting and large shake-ups in the final ranking, as can be seen in a meta analysis of the competition scores.

| Overfitting led to shake-ups in the final ranking. "Overfitting svg" by Gringer. Licensed under Creative Commons Attribution 3.0 |
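
The repeated, stratified cross validation described above can be sketched in a few lines of plain Scala. This is only an illustration of the general technique, not the winning team's code: CvSketch, stratifiedFolds, and repeatedCv are hypothetical names, and score is a placeholder callback that would train a model on the training indices and evaluate it (for example, by AMS) on the test indices.

```scala
import scala.util.Random

// Sketch of repeated stratified k-fold cross validation; all names are illustrative.
object CvSketch {
  // Shuffle the indices of each class separately, then deal them round-robin
  // into k folds so every fold keeps roughly the same class proportions.
  def stratifiedFolds(labels: Vector[Int], k: Int, rng: Random): Vector[Vector[Int]] = {
    val assigned: Vector[(Int, Int)] =
      labels.indices.groupBy(i => labels(i)).values.toVector.flatMap { idxs =>
        rng.shuffle(idxs.toVector).zipWithIndex.map { case (i, pos) => (pos % k, i) }
      }
    Vector.tabulate(k)(f => assigned.filter(_._1 == f).map(_._2))
  }

  // Repeat k-fold CV on fresh shuffles and average the caller-supplied score.
  // score(trainIdx, testIdx) is a hypothetical callback that fits and evaluates a model.
  def repeatedCv(labels: Vector[Int], k: Int, repeats: Int, seed: Long)
                (score: (Vector[Int], Vector[Int]) => Double): Double = {
    val rng = new Random(seed)
    val scores = (1 to repeats).toVector.flatMap { _ =>
      val folds = stratifiedFolds(labels, k, rng)
      folds.indices.map { f =>
        val train = folds.indices.filter(j => j != f).flatMap(j => folds(j)).toVector
        score(train, folds(f))
      }
    }
    scores.sum / scores.size
  }
}
```

Calling CvSketch.repeatedCv(labels, 2, 35, seed)(score) mirrors the 2-fold, 35-repeat setup: averaging over 70 held-out scores smooths out much of the variance of a single AMS estimate.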

Feature engineering

Feature engineering was less important in this contest, having a small impact on the score. The reason was that the organizers had already designed good features, such as those used in the actual Higgs discovery, into the provided dataset. Participants did come up with good features, such as a feature named “Cake”, which discriminated between the signal and one major source of background events, the Z boson.

Software and Algorithms

The software libraries and algorithms discussed on the forum were mostly standard ones: gradient tree boosting, bagging, Python scikit-learn, and R. The 1st-place entry used a bag of 70 dropout neural networks (overview, model and code). Early in the competition, Tianqi Chen shared a fast gradient boosting library called XGBoost, which was popular among participants.

| The 1st-place entry used a bag of 70 dropout neural networks. "Colored neural network" by Glosser.ca. Licensed under Creative Commons Attribution-Share Alike 3.0 |

Particle Physics meets Machine Learning

The organizers' overall goal was to increase the cross fertilization of ideas between the particle physics and machine learning communities. In that respect, this challenge was a good first step.

Physicists who participated began to appreciate machine learning techniques. This quote from the forum summarizes that sentiment: "The process of attempting as a physicist to compete against ML experts has given us a new respect for a field that (through ignorance) none of us held in as high esteem as we do now."

On the machine learning side, one researcher, Lester Mackey, developed a method to optimize AMS directly. In scikit-learn, support for sample weights was added to the GradientBoostingClassifier, in part due to interest from this competition.

And, much more will be discussed at the upcoming NIPS workshop on particle physics and machine learning. There will also be a workshop on data challenges in machine learning.

| There will be a workshop on particle physics and machine learning at the NIPS machine learning conference. |

Personal Note

I studied physics at Caltech and Stanford / SLAC (Stanford Linear Accelerator Center), before switching to computer science. Therefore, I could not pass up the opportunity to participate in this challenge myself. This was my first Kaggle competition, in which I ranked in the top 10%.

Further Reading

- The challenge website, with supplementary information not found on Kaggle: The HiggsML Challenge

- Tim Salimans, placed 2nd, with a blend of boosted decision tree ensembles: details

- Courtiol Pierre, placed 3rd, with an ensemble of neural networks: details

- Lubos Motl, a theoretical physicist who ranked 8th, wrote several blog posts about the competition: initial post

- "Log0" ranked 23rd by using scikit-learn AdaBoostClassifier and ExtraTreesClassifier: forum post

- "phunter" ranked 26th with a single XGBoost model: What can a single model do?

- Darin Baumgartel shared code based on scikit-learn GradientBoostingClassifier: post

- Trevor Stephens, a data scientist, tried out hundreds of automatically generated features: Armchair Particle Physicist

- Bios of participants who reached the top of the public leaderboard: Portraits

- Balazs Kegl, one of the organizers, talked about the challenge in this video: CERN seminar

Thursday, June 12, 2014

Storm vs. Spark Streaming: Side-by-side comparison

Overview

Both Storm and Spark Streaming are open-source frameworks for distributed stream processing. But, there are important differences as you will see in the following side-by-side comparison.

Processing Model, Latency

Although both frameworks provide scalability and fault tolerance, they differ fundamentally in their processing model. Whereas Storm processes incoming events one at a time, Spark Streaming batches up events that arrive within a short time window before processing them. Thus, Storm can achieve sub-second latency of processing an event, while Spark Streaming has a latency of several seconds.
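
The difference in processing model can be illustrated with a small plain-Scala sketch of micro-batching. It uses neither framework's API; MicroBatchSketch, Event, and microBatches are hypothetical names, and the sketch only shows how events that arrive within the same time window end up being processed together.

```scala
// Plain-Scala illustration of micro-batching; not Storm or Spark Streaming code.
// MicroBatchSketch, Event and microBatches are hypothetical names.
object MicroBatchSketch {
  case class Event(timestampMs: Long, payload: String)

  // Group events into fixed time windows. Each group would be processed
  // together as one small batch, instead of one event at a time.
  def microBatches(events: Seq[Event], windowMs: Long): Seq[(Long, Seq[Event])] =
    events.groupBy(e => e.timestampMs / windowMs).toSeq.sortBy(_._1)
}
```

An event that arrives early in a window has to wait for the window to close before its batch is processed, which is where the extra latency of the batched model comes from.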

Fault Tolerance, Data Guarantees

However, the tradeoff is in the fault-tolerance and data guarantees. Spark Streaming provides better support for stateful computation that is fault tolerant. In Storm, each individual record has to be tracked as it moves through the system, so Storm only guarantees that each record will be processed at least once, but allows duplicates to appear during recovery from a fault. That means mutable state may be incorrectly updated twice.

Spark Streaming, on the other hand, need only track processing at the batch level, so it can efficiently guarantee that each mini-batch will be processed exactly once, even if a fault such as a node failure occurs. [Actually, Storm's Trident library also provides exactly once processing. But, it relies on transactions to update state, which is slower and often has to be implemented by the user.]

| Storm vs. Spark Streaming comparison. |
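
To see why the delivery guarantee matters for mutable state, consider a running count of records. The plain-Scala sketch below is a simplified illustration under my own assumptions, not how either system is implemented; DeliverySketch and its methods are hypothetical names.

```scala
// Simplified illustration of at-least-once vs. exactly-once updates to a counter.
// DeliverySketch and its methods are hypothetical names, not framework APIs.
object DeliverySketch {
  // At-least-once, per record: a record replayed after a fault is counted again.
  def countAtLeastOnce(deliveries: Seq[String]): Int =
    deliveries.foldLeft(0)((count, _) => count + 1)

  // Exactly-once, per batch: remember which batch ids were already applied
  // and skip a batch that is replayed after a fault.
  def countExactlyOnce(deliveries: Seq[(Long, Seq[String])]): Int = {
    val (_, total) = deliveries.foldLeft((Set.empty[Long], 0)) {
      case ((seen, count), (batchId, records)) =>
        if (seen(batchId)) (seen, count)
        else (seen + batchId, count + records.size)
    }
    total
  }
}
```

With three distinct records and one replay, the per-record counter ends at 4, while the batch-level counter stays at 3 because the replayed batch id is recognized and skipped.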

Summary

In short, Storm is a good choice if you need sub-second latency and no data loss. Spark Streaming is better if you need stateful computation, with the guarantee that each event is processed exactly once. Spark Streaming programming logic may also be easier because it is similar to batch programming, in that you are working with batches (albeit very small ones).

Implementation, Programming API

Implementation

Storm is primarily implemented in Clojure, while Spark Streaming is implemented in Scala. This is something to keep in mind if you want to look into the code to see how each system works or to make your own customizations. Storm was developed at BackType and Twitter; Spark Streaming was developed at UC Berkeley.

Programming API

Storm comes with a Java API, as well as support for other languages. Spark Streaming can be programmed in Scala as well as Java.

Batch Framework Integration

One nice feature of Spark Streaming is that it runs on Spark. Thus, you can use the same (or very similar) code that you write for batch processing and/or interactive queries in Spark, on Spark Streaming. This reduces the need to write separate code to process streaming data and historical data.

| Storm vs. Spark Streaming: implementation and programming API. |

Summary

Two advantages of Spark Streaming are that (1) it is not implemented in Clojure :) and (2) it is well integrated with the Spark batch computation framework.

Production, Support

Production Use

Storm has been around for several years and has run in production at Twitter since 2011, as well as at many other companies. Meanwhile, Spark Streaming is a newer project; its only production deployment (that I am aware of) has been at Sharethrough since 2013.

Hadoop Distribution, Support

Storm is the streaming solution in the Hortonworks Hadoop data platform, whereas Spark Streaming is in both MapR's distribution and Cloudera's Enterprise data platform. In addition, Databricks is a company that provides support for the Spark stack, including Spark Streaming.

Cluster Manager Integration

Although both systems can run on their own clusters, Storm also runs on Mesos, while Spark Streaming runs on both YARN and Mesos.

| Storm vs. Spark Streaming: production and support. |

Summary

Storm has run in production much longer than Spark Streaming. However, Spark Streaming has the advantages that (1) it has a company dedicated to supporting it (Databricks), and (2) it is compatible with YARN.

Further Reading

For an overview of Storm, see these slides. For a good overview of Spark Streaming, see the slides to a Strata Conference talk. A more detailed description can be found in this research paper.

Update: A couple of readers have mentioned this other comparison of Storm and Spark Streaming from Hortonworks, written in defense of Storm's features and performance.

April, 2015: Closing off comments now, since I don't have time to answer questions or keep this doc up-to-date.
